In case you missed it, last week we talked about the differences between humans and machines through the lens of decision making. This week we’ll return to some classics!

This week:

🪄 Topic: What optical illusions can tell us about our biases and heuristics

🧠 Bias 6/50: Confirmation Bias

🛠️ Tool: Decision Types

What optical illusions can tell us about our biases and heuristics

For the first week of this series, we talked about blind spot bias as a surprisingly difficult concept to grasp. Believing we have any control over the biases and heuristics that influence our judgment does more harm than good.

This is why many argue that ‘bias education’ is a hollow pursuit: in many cases, awareness of biases can have an adverse effect. If someone believes their education makes them less biased, the blind spot becomes much stronger.

This leads us back to one of the Blueprint’s principles: We cannot debias individuals, but we can adjust for biases in teams. As Olivier Sibony and Daniel Kahneman argue in their book, ‘Noise: A Flaw in Human Judgment’, there are techniques that help organizations systematically adjust for deviations in human judgment.

“Bias and noise—systematic deviation and random scatter—are different components of error.”

But, as they go on to argue, the frameworks for judgment we’ve spent our lives building through experience shape our uniqueness. They’re the reason we disagree. They’re the reason progress comes out of our conviction and dissent.

In order to arrive at the conclusion that this is a systemic problem, not an individual problem, we have to illustrate how, in this case, seeing is not believing.

Cognitive biases are much like optical illusions

Biases act like optical illusions. Even if we see or understand the illusion, we can’t necessarily control it.

When talking to teams about the importance of systematic approaches to combat bias, it’s helpful to walk through a few popular illusions and riddles to illustrate how these work.

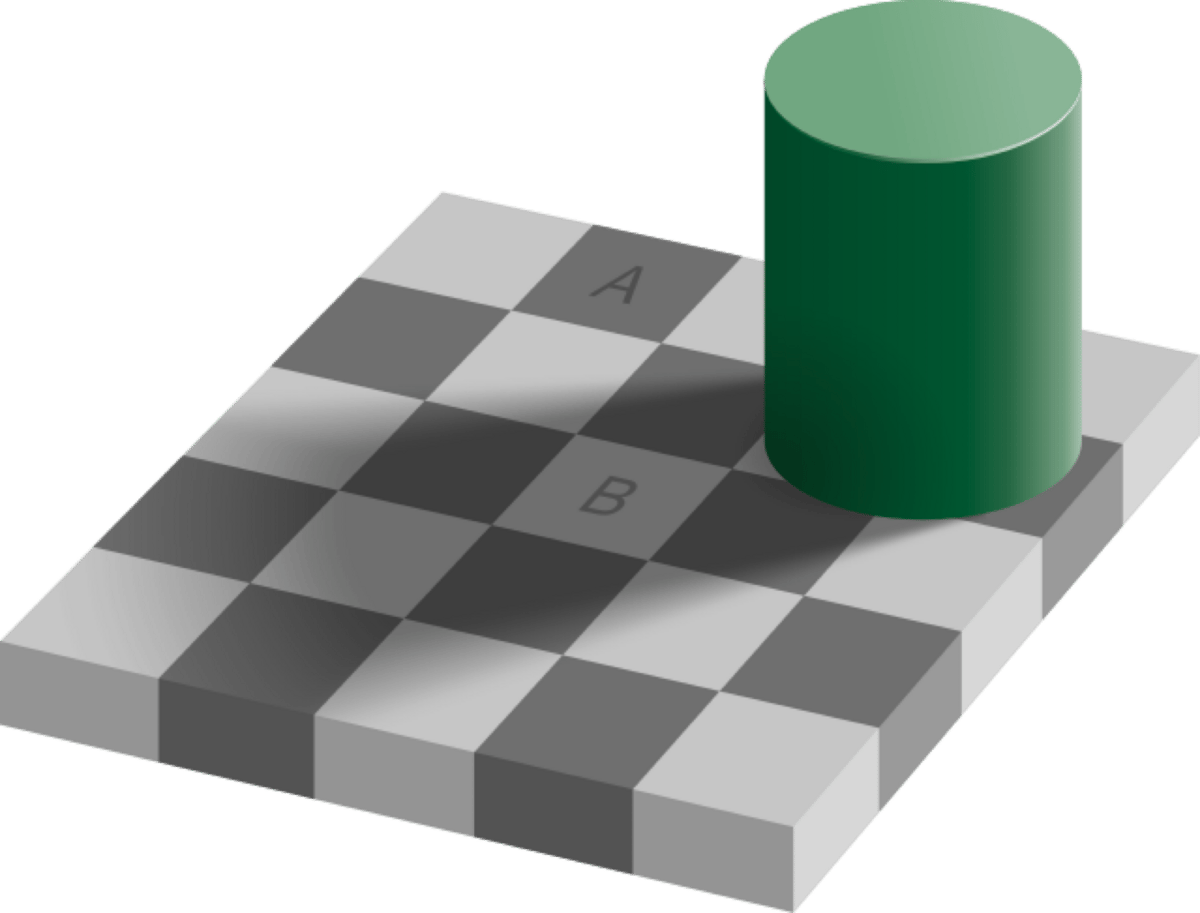

Here we have a classic visual illusion that shows how our brain manipulates our perception to see depth:

Looking at the A square and the B square… are they the same color? They look completely different. One looks almost objectively darker than the other.

But when we connect the squares, the illusion becomes clear. The squares are exactly the same color.

The illusion is obvious when we put the squares together, but when we look back at the original image, they still look different.

This is an important, often overlooked, reality of biases and heuristics. Much like visual illusions, once we ‘see’ our ‘cognitive illusions’, things aren’t suddenly clear. Much like looking back at the original image, we’re not just automatically enlightened that the colors are clearly the same.

We remain blind to these illusions.

With the shadow example, we all had the same experience - but our cognitive illusions often vary based on individual perception. They’re relative to our experiences, existing beliefs, preferences, etc…

Remember this viral picture of a dress?

People tend to see two very different colors - they describe the dress as either ‘gold and white’ or ‘black and blue’. Don’t believe it? Ask your friends!

This went viral years back because people couldn’t believe that others saw such drastically different colors. People are typically shocked that others don’t see the dress the same way they do. It feels objective.

Years later, a few different papers studied the viral phenomenon of the dress.

The explanation is not about what we see, but what we assume. Each person who looks at this image reasons their way into assumptions about the environment the dress is in. Is it in a well-lit store? Is it in a dark closet?

Once our brains predetermine where the dress is, that assumption drives the color we perceive (either blue/black or white/gold).

“The perceived colors of the dress are due to (implicit) assumptions about the illumination…

Moreover, our results provide some evidence that prior experience with disambiguated images may push observers to interpret the ambiguous original photo in one or the other way. These observations suggest that the perception of the dress colors may be modulated by biasing observers toward one or the other interpretation of the photo.”

What’s interesting about this particular phenomenon is that it’s incredibly difficult for someone to ‘unsee’ the color they see. If I see blue and black, it’s almost impossible for me to see white and gold.

These researchers found that by priming participants, they were able to influence what people saw. In this study, showing participants altered images (that clearly predetermined the color of the dress) before exposing them to the original image modified their perception.

This is much like how biases and ‘information cascades’ work. They are relative to our experience and unwinding the reasoning that leads to our perceptions is incredibly difficult.

By trying to change how we see the original image (knowing that it’s possible to see something completely different), we can see how strong this illusory reasoning is, and how difficult it is to change even if we try.

Bias 6/50: Confirmation Bias

“The lesson the researchers learned from all this, as they wrote in the introduction to When Prophecy Fails: ‘A man with a conviction is a hard man to change.’”

How to combat confirmation bias

It’s convenient to think that smart people with high-level reasoning skills wouldn’t fall prey to these effects, but unfortunately, intelligence isn’t the cure. Research suggests intelligence does not correlate with resistance to confirmation bias. In fact, some argue it may amplify confirmation bias.

As David Robson writes in his book, The Intelligence Trap…

“Intelligent and educated people are less likely to learn from their mistakes, for instance, or take advice from others. And when they do err, they are better able to build elaborate arguments to justify their reasoning, meaning that they become more and more dogmatic in their views. Worse still, they appear to have a bigger "bias blind spot," meaning they are less able to recognize the holes in their logic.”

Below are a few tools and frameworks that can help combat the effects of confirmation bias.

Question and criticize our own beliefs over others

We’ve all had the thought “How does that person even think that?!”. It’s easy for us to criticize others’ thinking — especially in hindsight.

We can build a muscle for questioning ourselves more than others. Daniel Kahneman recommends actively seeking ‘surprises’ - reframing information that disproves beliefs as information that updates them.

“You are more likely to learn something by finding surprises in your own behavior than by hearing surprising facts about people in general.”

Foster dissent and build an environment with diverse thinking, backgrounds, and experiences

It’s easy to throw rocks at someone else’s glass echo chamber, but we are all wired to do the exact same thing.

We favor opinions that agree with us and give more merit to people we like. It’s human — we seek approval and acceptance over almost everything else.

Increasing disagreeableness doesn’t mean we need to build a negative culture of fighting and friction, but complacency is dangerous. Teams need some level of disagreeableness.

Treat a team’s information intake like a healthy diet

Receiving information and feedback that supports our existing beliefs is like eating junk food. It feels good to be told we’re right. Our brain will naturally indulge in the information that supports our existing beliefs.

Welcome counterarguments and actively include people who have different opinions. It doesn’t come naturally. Red teaming is an interesting way to do this systematically.

Track, revisit, and adapt our beliefs

In environments of extreme uncertainty, our decisions depend on our ability to shape our beliefs. The more frequently we revisit and adapt our beliefs, the better we are at updating our decision-making model.

We might feel like this happens naturally, but it doesn’t. If we aren’t intentionally revisiting and adapting our beliefs to drive our decisions, we end up on our heels with an outdated, inaccurate model driving our decisions.

Tool: Decision Types

This method may be very familiar, but it feels like it needs to be covered.

Made popular by Jeff Bezos in his 2015 letter to Amazon shareholders, this technique involves identifying two types of decisions: Type 1 and Type 2.

Type 1: Also known as one-way door decisions, these are irreversible and have long-term consequences. These are the decisions that set the direction and strategy of the company, such as opening a new fulfillment center or acquiring another company. These decisions are made slowly and deliberately, with a great deal of consideration and analysis.

Type 2: Also known as two-way door decisions, these are reversible and have short-term consequences. These are the day-to-day operational decisions, such as deciding on a new product feature or how to handle a customer service issue. These decisions are made quickly, with less analysis and more experimentation.

Type 1 decisions should be made slowly and deliberately, while Type 2 decisions should be made quickly and with a bias towards action. This system allows for a balance of cautious long-term planning and flexible short-term execution.

This decision-making system is useful because it helps teams avoid getting bogged down in analysis paralysis and indecision.

Additional dimensions

We can build on these decision types to bring more nuance to the ‘speed and urgency’ model to understand when to address a decision and how long that decision should take.

Use these dimensions with decision types to build your own models for decision triage, prioritization, and delegation.

Consequence of decisions

One dimension decision types don’t cover is the consequence of a decision. A one-way door decision that is relatively inconsequential would be handled differently than the same decision type with a higher consequence.

As Shane Parrish at Farnam Street highlights, the definition of ‘consequential’ and ‘inconsequential’ is relative to you, your team, or your business. Something that might be inconsequential to you may be consequential to others.

For that reason, be explicit when defining what these terms mean and propose the action that needs to be taken when decisions fall in their respective quadrants.
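One way to make those definitions explicit is to write the quadrants down as a small lookup. The sketch below is only an illustration: the `Decision` fields, the `triage` function, and the action wording in `ACTIONS` are all hypothetical placeholders, not something Bezos or Parrish prescribe. Replace them with your team's own definitions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    name: str
    reversible: bool      # True = two-way door (Type 2), False = one-way door (Type 1)
    consequential: bool   # judged against your team's own definition


# Hypothetical action per quadrant, keyed by (reversible, consequential).
ACTIONS = {
    (False, True): "decide slowly and deliberately",
    (False, False): "decide carefully, but time-box the analysis",
    (True, True): "decide quickly, monitor, and be ready to reverse",
    (True, False): "delegate and decide immediately",
}


def triage(decision: Decision) -> str:
    """Return the agreed action for the quadrant this decision falls in."""
    return ACTIONS[(decision.reversible, decision.consequential)]


if __name__ == "__main__":
    acquisition = Decision("acquire a company", reversible=False, consequential=True)
    print(acquisition.name, "->", triage(acquisition))
```

Keeping the actions in one table means the team argues about the definitions once, not every time a decision comes up.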

Cost of reversibility

The decision types themselves are binary - either a decision is reversible (two-way door) or it isn’t (one-way door). This binary fails to account for cost. Between two reversible decisions, the cost to reverse often varies. Reversing one decision may cost only lost time or opportunity, while reversing the other may require costly work to unwind back to the starting point.

Adding the dimension of cost may be represented as a general label (e.g. high, medium, low options), a relative scoring method (e.g. 10 for high cost, 1 for low cost), or as true monetary cost.
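As a rough sketch, the three representations can be tied together in a few lines. The 1 and 10 scores follow the example above; the medium score of 5, the dollar thresholds, and the sample decisions are assumptions made up for illustration.

```python
from enum import IntEnum


class ReversalCost(IntEnum):
    """General label (name) paired with a relative score (value)."""
    LOW = 1
    MEDIUM = 5    # assumed midpoint; the text only gives 1 and 10
    HIGH = 10


def label_from_money(cost: float,
                     low_max: float = 1_000,
                     medium_max: float = 25_000) -> ReversalCost:
    """Map an estimated monetary cost of reversal to a label.

    The thresholds are hypothetical defaults; set them for your own business.
    """
    if cost <= low_max:
        return ReversalCost.LOW
    if cost <= medium_max:
        return ReversalCost.MEDIUM
    return ReversalCost.HIGH


# Made-up two-way door decisions with estimated costs to reverse.
decisions = {
    "change pricing page copy": 200,
    "switch sprint length": 5_000,
    "migrate billing provider": 80_000,
}

# Most expensive to reverse first, so those get the most scrutiny.
ranked = sorted(decisions, key=lambda name: label_from_money(decisions[name]), reverse=True)

if __name__ == "__main__":
    for name in ranked:
        print(f"{label_from_money(decisions[name]).name:<6} {name}")
```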

For one-way door decisions, this is much trickier. Based on their definition, we may assume that they are truly irreversible and therefore there’s no need to define the cost of reversibility, but Annie Duke suggests the method of ‘decision stacking’.

Decision stacking is the method of breaking down a potentially complex one-way door decision into smaller, less costly decisions. This exercise may surface reversible decisions, but at the very least, provides checkpoints or tripwires to revisit a one-way door decision before it becomes more costly.

Delegation, escalation, automation

When categorizing decision types, there’s an opportunity to continuously build a strategy for future decisions. With each new decision, the team can calibrate when decisions should be delegated or escalated and identify repeated decisions that can be automated.

For decisions that are delegated: Could we have delegated this decision sooner? Is there a systematic way to determine who (or what team) this type of decision delegates to?

For decisions that are escalated: Could we have escalated this decision sooner? Which decisions should particular individuals make on their own vs decisions that are escalated?

For repeating decisions: Could this decision be automated? What information would we need to automate this decision? How much would it cost to automate this decision?

Continuously building this rubric helps calibrate the triage to help the team only focus energy on decisions that are important and relevant in the long term - especially to avoid the tendency to create unnecessary processes.

“As organizations get larger, there seems to be a tendency to use the heavy-weight Type 1 decision-making process on most decisions, including many Type 2 decisions. The end result of this is slowness, unthoughtful risk aversion, failure to experiment sufficiently, and consequently diminished invention. We’ll have to figure out how to fight that tendency.” — Jeff Bezos